KV Caching in LLMs: A Guide for Developers - MachineLearningMastery.com

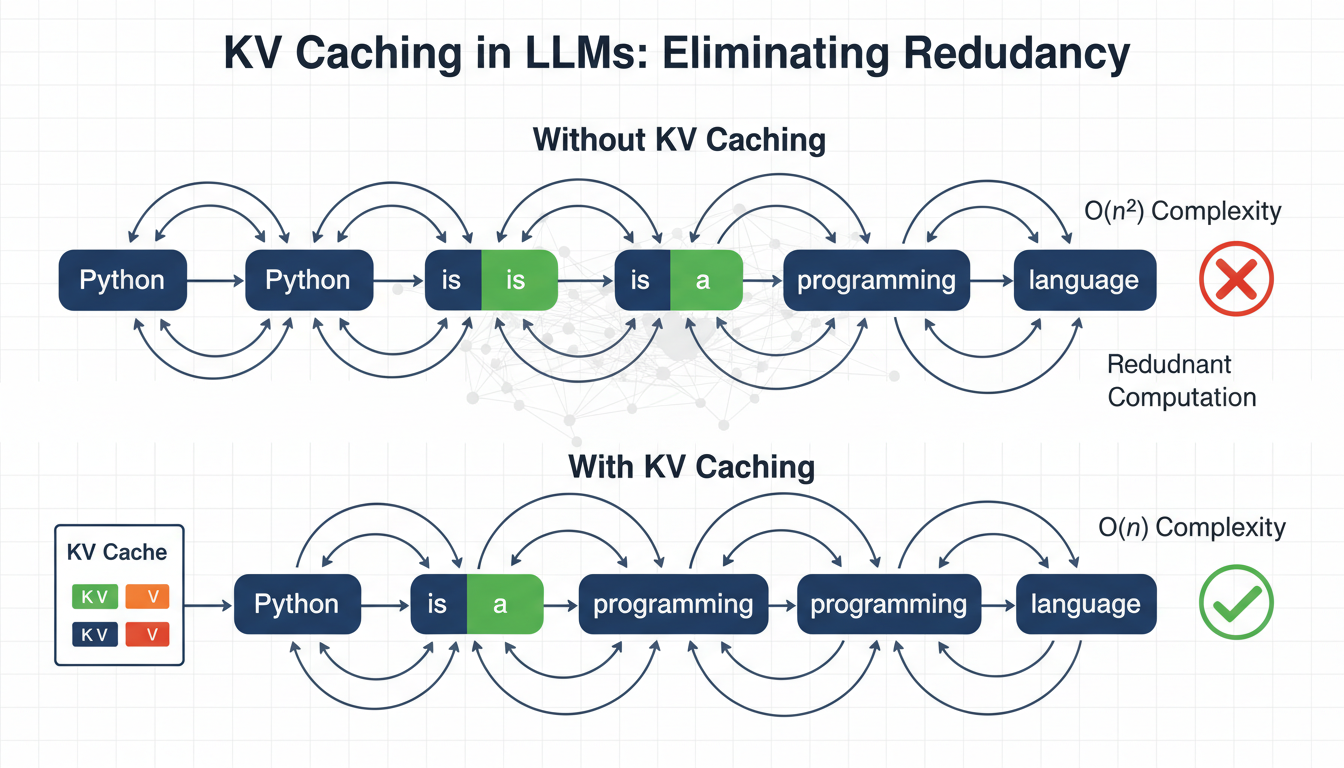

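In this article, you will learn how key-value (KV) caching eliminates redundant computation in autoregressive transformer inference. The model stores the key and value projections of tokens it has already processed, so each decoding step computes them only for the newest token instead of recomputing them for the whole sequence. This substantially speeds up generation.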

Source: MachineLearningMastery.com
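To make the idea concrete, here is a minimal sketch of KV caching for a single attention head. It assumes NumPy, random projection weights, and random token embeddings. All names (`W_q`, `W_k`, `W_v`, `KVCache`, `decode_without_cache`, `decode_with_cache`) are hypothetical illustrations, not code from the article or the API of any library. The sketch compares a decoding loop that recomputes keys and values for the entire prefix at every step against one that appends only the newest token's key and value to a cache, and checks that both produce the same attention outputs.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model = 8  # hypothetical embedding size

# Hypothetical random projection weights for one attention head.
W_q = rng.standard_normal((d_model, d_model))
W_k = rng.standard_normal((d_model, d_model))
W_v = rng.standard_normal((d_model, d_model))


def softmax(x):
    e = np.exp(x - x.max())  # subtract max for numerical stability
    return e / e.sum()


def attend(q, K, V):
    """Scaled dot-product attention of one query over all keys/values."""
    scores = K @ q / np.sqrt(q.shape[-1])
    return softmax(scores) @ V


def decode_without_cache(xs):
    """Recompute K and V for the whole prefix at every step (redundant work)."""
    outputs = []
    for t in range(1, len(xs) + 1):
        prefix = xs[:t]
        K = prefix @ W_k  # projects all t tokens again
        V = prefix @ W_v
        q = xs[t - 1] @ W_q  # query for the newest token only
        outputs.append(attend(q, K, V))
    return np.stack(outputs)


class KVCache:
    """Holds the keys and values of tokens already processed."""

    def __init__(self, d):
        self.K = np.empty((0, d))
        self.V = np.empty((0, d))

    def append(self, k, v):
        # vstack promotes the 1-D k and v to single rows.
        self.K = np.vstack([self.K, k])
        self.V = np.vstack([self.V, v])


def decode_with_cache(xs):
    """Project only the newest token and reuse cached keys/values."""
    cache = KVCache(xs.shape[1])
    outputs = []
    for x in xs:
        cache.append(x @ W_k, x @ W_v)  # one new row per step
        q = x @ W_q
        outputs.append(attend(q, cache.K, cache.V))
    return np.stack(outputs)


xs = rng.standard_normal((5, d_model))  # hypothetical embeddings of 5 tokens
no_cache = decode_without_cache(xs)
with_cache = decode_with_cache(xs)
print(np.allclose(no_cache, with_cache))  # True
```

Both loops return the same outputs, but for five tokens the uncached loop projects 1 + 2 + 3 + 4 + 5 = 15 token rows into keys (and again into values), while the cached loop projects only 5. The gap grows quadratically with sequence length. The price is memory: the cache keeps every past key and value.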